AI and Your App

Artificial Intelligence 2

This is a bonus lesson that will expand your knowledge of AI and how it works. It is not a scored part of your submission, but it can help you learn how to integrate AI with your app. If you need a refresher on how AI works, check out the other AI lesson in Week 2: All About AI.

In this lesson, you will…

- Consider the ethics of AI

- Decide if AI is right for your app

- Learn how to integrate some AI elements with App Inventor and Thunkable

Key Terms and Concepts

- Ethics - a set of moral principles that affect how people decide what’s right or wrong

- Bias - preconceived ideas somebody has that are often unfair to some people or groups

We’ll be taking a deeper dive into AI application and your app today. If you need a refresher, review Artificial Intelligence 1: All About AI.

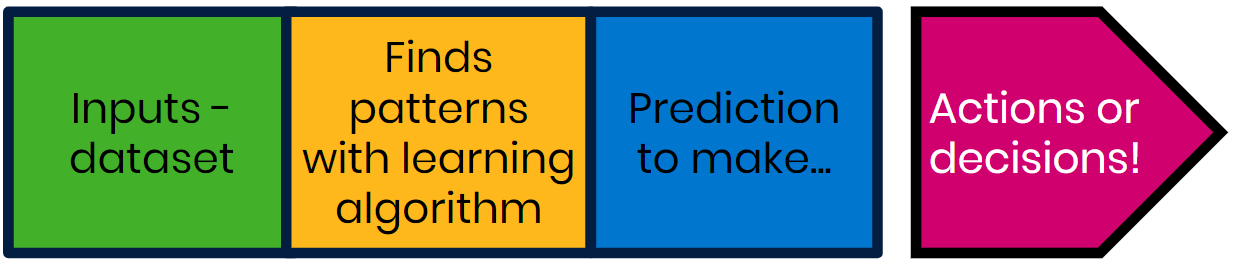

Most importantly, remember the 4 basic parts of AI:

If you need an example, check out this video from our friends at Google that shows how someone used AI to act on a problem:

Google and Technovation also held a workshop on an AI tool called Dialogflow that you can check out in the additional resources section!

By this point, you likely have ideas about what you want your app to do. Will AI be important for your app? Let’s consider a few more things before we answer that.

Ethics and Actions

Ethics is a part of philosophy that has to do with what is right or wrong. This is an important topic in the world of AI today for many reasons! You’ll want to make sure your app is making decisions that help people and society instead of causing harm, even if the harm is by accident. Here is a story from Joy Buolamwini about her experiences with technological bias.

Put yourself in her shoes. How would you respond to the findings? If you were the inventor, would you feel comfortable releasing similar technology? How can we make technology more fair and representative of all kinds of people?

One place to start is by thinking about “healthy” data. Removing bias in your data helps make your model healthier, so that it can make better decisions.

We’ve already learned some details about bias in training data. Here’s a video that outlines more:

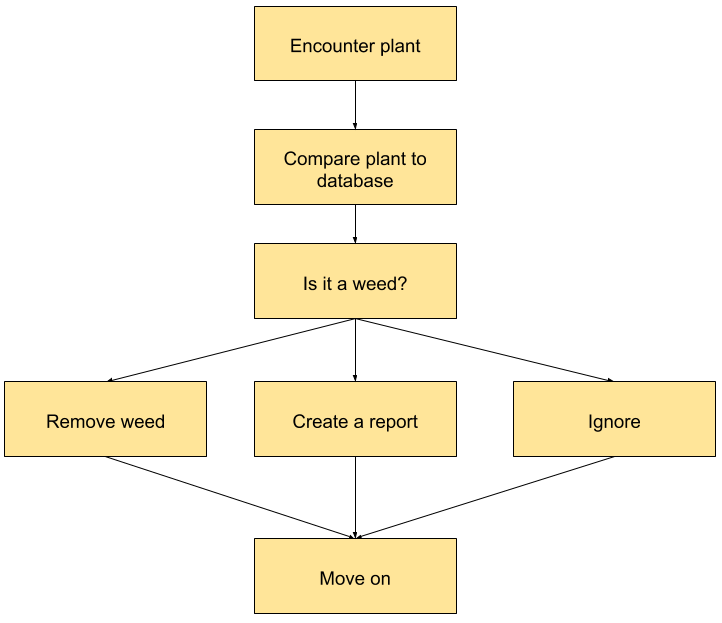

The Weed Puller

But data isn’t the only area of AI where we can think about ethics. We can also think about the ethics of how the AI is used and what results it produces. Imagine an AI technology called the Weed Puller that predicts which plants are weeds and pulls them out. Using the Weed Puller as a base, consider the following:

This may be a lesson focused on AI, but these ethical questions are applicable to your entire app.

As creators, we bear responsibility for how our technology interacts with people, and we should always be mindful of the potential impact our creations can have.

Which solutions need AI?

Not every problem requires technology or AI. Think about which sorts of problems are best suited for AI, such as:

- Situations that can't easily be programmed step by step (ex - Google Maps)

- Situations where something can be predicted from new information (ex - when given a new x-ray, an AI tool could identify cancer)

- Situations where a prediction is connected to an action (ex - smart vacuum)

Carefully consider if your app should use AI. Does it add significant value to your core design functions?

AI Features in App Inventor and Thunkable

Here are the main features available in App Inventor and Thunkable that utilize AI:

| | Image Recognition | Speech Recognition | Language Translation |
| --- | --- | --- | --- |
| App Inventor¹² | | | |
| Thunkable | | | |

¹ Artificial intelligence blocks are not natively on the App Inventor platform and need to be downloaded as extensions. Official extensions are available here. Learn how to integrate extensions in your App Inventor platform here.

² Machine Learning for Kids, a website where you can train your own machine learning models, can integrate with App Inventor. Watch this video for an example on how to build your first model. After you train your first model, you'll be able to import your ML4K project as an App Inventor extension.

As you can see, the functions available in App Inventor and Thunkable are a bit basic; you won’t be training your own AI model without outside integration.

However, in Coding 13: Cloud Storage and APIs, you’ll learn what APIs are and how to connect other resources to your own app. You can find other programs that give you more control over your AI model and integrate them into your app that way instead.

More resources can be found in the Additional Resources section of this lesson.

Activity: Image Recognition

Let's code a simple image recognition app. The steps for App Inventor and Thunkable are outlined in their respective sections:

App Inventor

Thunkable

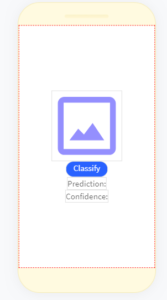

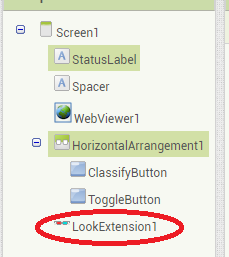

Setting Up 1 (App Inventor)

First, let’s get all our screen components in place.

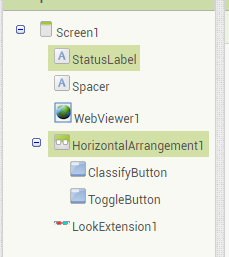

We have two Labels, a WebViewer, two Buttons within a Horizontal Row, and the LookExtension (more on that in Step 2).

Setting Up 1 (Thunkable)

First, let’s get all our screen components in place.

We have an Image, a Button, two Labels, a Camera, and an Image Recognizer.

---

Setting Up 2

You’ll notice that there’s a LookExtension1 that’s not normally available in App Inventor. Here is the official list of App Inventor Extensions. Here are the instructions on how to import an extension into your project.

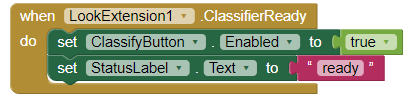

Coding 1 (App Inventor)

There are two buttons available to the user: Classify and Toggle. Here are the tasks we want to accomplish:

- Let the user use the Classify button only once LookExtension is ready. (LookExtension needs a bit of time to load before it can be used.)

- When the Classify button is clicked, it should call LookExtension to analyze what the camera sees.

- When the Toggle button is clicked, the camera that the app uses should switch to another available camera on the device.

- Finally, once LookExtension has analyzed the image from the camera, the app should show its prediction in the StatusLabel.
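To see how these four tasks fit together, here is a minimal Python sketch of the same event-driven logic. It is only an analogy for the blocks you will build, not App Inventor code: the class name, method names, camera names, and the fake classifier are all made up for illustration.

```python
class LookAppSketch:
    """Plain-Python stand-in for the four tasks listed above (all names are hypothetical)."""

    def __init__(self, cameras=("Front", "Back")):
        self.cameras = list(cameras)     # made-up camera names
        self.camera_index = 0
        self.classify_enabled = False    # Classify stays off until the classifier has loaded
        self.status_label = "Loading..."

    def on_classifier_ready(self):
        # Task 1: only now may the user press Classify.
        self.classify_enabled = True
        self.status_label = "Ready"

    def on_classify_click(self, classify_frame):
        # Task 2: ask the classifier to analyze what the current camera sees.
        if not self.classify_enabled:
            return  # ignore clicks that arrive before the classifier is ready
        self.on_got_classification(classify_frame(self.cameras[self.camera_index]))

    def on_toggle_click(self):
        # Task 3: switch to the next available camera, wrapping around at the end.
        self.camera_index = (self.camera_index + 1) % len(self.cameras)

    def on_got_classification(self, result):
        # Task 4: show the most confident prediction, assumed to be the first item.
        self.status_label = str(result[0]) if result else "No prediction"


app = LookAppSketch()
fake_classifier = lambda camera: ["cat", "dog"]  # made-up results for the demo

app.on_classify_click(fake_classifier)   # ignored: classifier is not ready yet
status_before_ready = app.status_label
app.on_classifier_ready()
app.on_classify_click(fake_classifier)
app.on_toggle_click()
print(status_before_ready, "->", app.status_label, "| camera:", app.cameras[app.camera_index])
```

Notice that the Classify handler does nothing until the "ready" handler has run. That is the same idea as keeping the button disabled while the extension loads.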

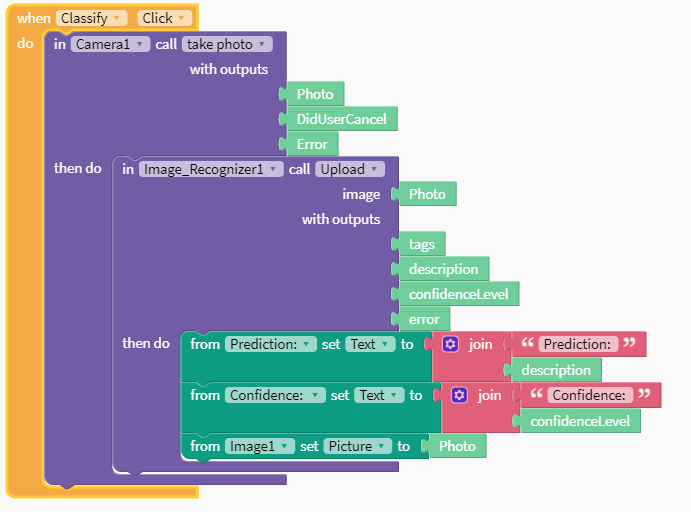

Coding 1 (Thunkable)

There is one button available to the user: Classify. Here are the tasks we want to accomplish:

- When the Classify button is clicked, the Camera should first take a picture.

- Then Image Recognizer should analyze the picture we got from the camera.

- Finally, the app should show the user the photo, inform the user what Image Recognizer predicts the photo is, and how confident the app is in its prediction. We want to inform the user by setting the Image displayed and changing the text Labels.
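The three tasks above run one after another, each using the output of the one before it. Here is a rough Python sketch of that flow. It is an illustration only, not Thunkable code: the function names, the file name, and the prediction values are made up, and it assumes the recognizer hands back a label together with a confidence between 0 and 1.

```python
def classify_photo(take_picture, recognize):
    """Run the three steps in order and return what the screen should show."""
    photo = take_picture()                  # step 1: the Camera takes a picture
    label, confidence = recognize(photo)    # step 2: the recognizer analyzes that picture
    return {                                # step 3: values for the Image and the two Labels
        "image": photo,
        "prediction_label": f"I think this is: {label}",
        "confidence_label": f"Confidence: {confidence:.0%}",
    }


screen = classify_photo(
    take_picture=lambda: "photo.jpg",            # made-up file name
    recognize=lambda photo: ("banana", 0.87),    # made-up prediction
)
print(screen["prediction_label"])
print(screen["confidence_label"])
```

In your app, the same chaining happens with blocks: the picture from the Camera becomes the input to Image Recognizer, and the recognizer's outputs become the new Image and Label values.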

---

---

1. Let the user use the Classify button when LookExtension is ready.

Hint: Look through the blocks available in LookExtension and see if there are any that would be useful for you.

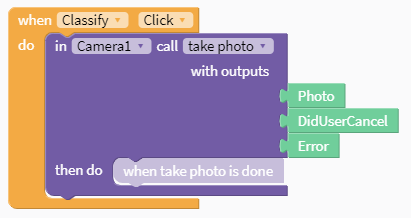

1. When the Classify button is clicked, the Camera should first take a picture.

Hint: Where should we look when we want something to happen when a button is clicked?

---

---

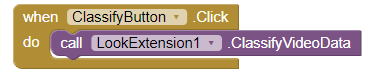

2. When the Classify button is clicked, it should call LookExtension to analyze what the camera sees.

Hint: Nothing too fancy here. "When a Button is clicked, do something." How do we do that?

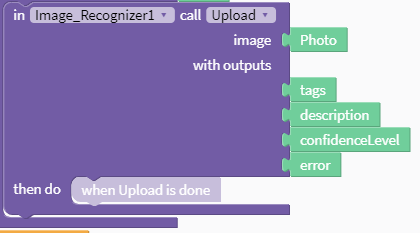

2. Then Image Recognizer should analyze the picture we got from the camera.

Hint: Look in the blocks under Image Recognizer. Which one would be useful here? What input does it need?

---

---

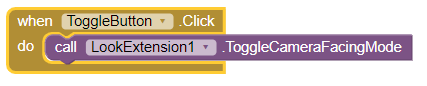

3. When the Toggle button is clicked, the camera that the app uses should switch to another available camera on the device.

Hint: Another button! Remember to look in the available blocks under LookExtension.

3. Finally, the app should show the user the photo, inform the user what Image Recognizer predicts the photo is, and how confident the app is in its prediction. We want to inform the user by setting the Image displayed and changing the text Labels.

Hint: We’re changing two labels and one image here. Use blocks from the appropriate section.

---

---

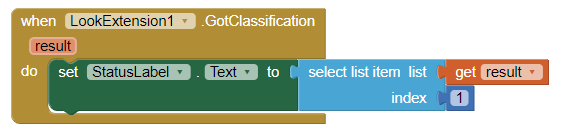

4. Once LookExtension has analyzed the image from the camera, the app should show its prediction in the StatusLabel.

Hint: LookExtension stores its predictions as a list of possible answers. This list is named “result” and its most confident prediction is the first item in this list.
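If it helps to picture the hint, here is a small Python sketch of picking the first item out of a prediction list. The sample data is invented, and the sketch assumes each item is a label paired with a confidence value; check what your own “result” list actually contains before relying on that shape.

```python
def top_prediction(result):
    """Return the first (most confident) entry of a prediction list, or None if it is empty."""
    return result[0] if result else None


# Invented sample data, assuming each item is [label, confidence].
result = [["coffee mug", 0.81], ["cup", 0.12], ["bowl", 0.03]]

best = top_prediction(result)
status_label = f"{best[0]} ({best[1]:.0%})" if best else "No prediction yet"
print(status_label)
```

In App Inventor, the matching idea is to select item number 1 from the list, since App Inventor lists start counting at 1 rather than 0.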

---

You’re ready to test!

Connect your mobile device to Thunkable and try it out!

Coding 2

We also need to handle how this app deals with errors.

Many apps have a way to handle errors. For example, think of what happens when you enter an incorrect password while logging in. Most of the time, the app will say something like “Invalid Password, try again”.

The text there is purely for humans to understand what the app thinks is wrong. Even when things don’t go right, it’s important to understand what’s happening!
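As a rough illustration of that idea, the Python sketch below turns an error code into a message a person can understand. The error codes and messages here are invented for the example and are not the real codes used by any extension.

```python
# Hypothetical error codes mapped to human-friendly messages.
ERROR_MESSAGES = {
    "camera_unavailable": "We couldn't open the camera. Check the app's permissions.",
    "classifier_not_ready": "The classifier is still loading. Please wait a moment.",
    "classification_failed": "We couldn't analyze that image. Try again.",
}


def describe_error(code):
    """Return a readable message for a known code, or a general fallback for anything else."""
    return ERROR_MESSAGES.get(code, f"Something went wrong (error: {code}). Please try again.")


known_message = describe_error("classifier_not_ready")
unknown_message = describe_error("E42")
print(known_message)
print(unknown_message)
```

The fallback message matters: even for an error you did not plan for, the user still sees something understandable instead of nothing at all.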

Copy the following code into your project. Read through it and make an effort to understand what’s going on.

You’re ready to test!

Connect your mobile device to App Inventor and try it out!

Reflection

Once you’ve taken a few pictures with your new image recognition app, take a moment and reflect:

- What improvements to this app could you make?

- Could image recognition be used to help solve a problem in your community? What about speech recognition or other types of AI?

- Using your answer to the previous question, consider the ethics behind your solution.

- Who would be affected by your solution?

- What good could it do? What harm could it do?

- How can you make it cause the most good and least harm to direct and indirect users?

Additional Resources: Advanced Integrations

Advanced AI Integrations

Remember to go over Coding 13: Cloud Storage and APIs to learn more about integrating outside services into your app. Some of these are only compatible with more advanced coding languages (such as Java or Swift), but they’re definitely worth a look regardless of whether you intend to use them in your app.

- Dialogflow

  - Great for creating conversational AI apps (scroll down a bit more for a video!).

- TensorFlow

  - Lots of tools for tasks like transcribing handwritten numbers, pose guessing, and more!

- Google

  - Google has a large library of AI tools, such as translation, image recognition, and more. Check out this overview video for a nice summary. Note: If you decide to use these tools, be sure to double-check the pricing. Some tools are free to use depending on how many users use your app.

SolveIt Series by Technovation

The SolveIt series has some more topics for you to reflect on. Here are a few that discuss ethics and technology.

For more about ethics, you can also check out the MIT AI Ethics Curriculum.

AI in Action

Technovation partnered with VianAI and Google to run a couple of workshops involving AI. Watch coding with AI in action!